(and what you should know before you choose one)

When it comes to QA, AI-powered automated testing tools promise more speed, better coverage, and lower maintenance. But they don’t all solve the same problems, and they can approach those problems in fundamentally different ways.

Some platforms lean heavily into autonomy. Others focus primarily on speed or aggressive self-healing. A smaller group applies AI in specific parts of the workflow while preserving test execution reliability and human control.

These differences matter.

In this comparison, we’re looking at AI-assisted automated testing tools for QA. These are platforms that use AI to speed up test creation, reduce maintenance, and improve signal when things change. Some are optimized for SMBs and fast-moving startups that need coverage quickly without building a dedicated QA engineering org. Others are built with enterprise governance, scale, and auditability in mind. And several sit somewhere in between, serving both, depending on how much structure, oversight, and customization a team requires. What they all share is a goal of reducing brittle tests, manual effort, and maintenance drag, even if they take very different paths to get there.

AI can dramatically reduce manual work in QA. It can generate tests, adapt to UI changes, and accelerate triage. But AI layered onto brittle foundations often accelerates noise instead of reducing it. And AI that hides its decisions can quietly erode trust in your release signals.

To make sense of the current AI QA landscape, it helps to look past marketing language and understand how each platform actually uses AI, what tradeoffs are in play, and which kinds of companies and teams each tool suits best.

The comparisons below break down different approaches to AI and automated testing for QA so you can see where each tool shines, what may make it less ideal for you, and which approach to QA aligns best with how your team thinks about quality.

Let’s jump in.

1. Rainforest QA

Rainforest QA is an AI-accelerated test automation platform built specifically for end-to-end (E2E) web application testing. Rainforest can also test browser extensions.

Rather than layering generative AI on top of brittle, code-based frameworks, Rainforest is a no-code QA automation platform with AI thoughtfully embedded into test creation, execution, and maintenance.

Rainforest’s platform includes everything you need for the automated testing workflow:

- AI-generated recommendations on what to test

- No-code test creation and test planning tools

- Self-healing and maintenance workflows

- Cloud-based infrastructure for running tests massively in parallel

- Detailed, human-readable test results, including video recordings

- Integrations with all major CI/CD platforms, Slack, Microsoft Teams, and Jira

- Full access to virtual machines (no headless browser)

Not only does Rainforest use AI for test creation and self-healing, but it also applies AI throughout the testing lifecycle to reduce upfront test suite creation work, brittleness, and long-term maintenance costs.

Rainforest uses a patent-pending AI approach for higher reliability

Unlike tools that rely primarily on off-the-shelf LLMs at runtime, Rainforest’s AI uses a patent-pending approach that improves accuracy and consistency in test creation and maintenance while preserving deterministic execution.

Rainforest’s AI helps build and maintain tests. It does not reinterpret your application unpredictably on every run. The results of automated testing are release signals, and release signals need to be predictable.

Rainforest avoids test brittleness by design

Automated testing that frequently fails due to minor changes in your app has a name: “brittle tests.” Brittle tests are one of the biggest sources of wasted time in automated testing, and “self-healing” alone doesn’t fix that if the underlying model is fragile.

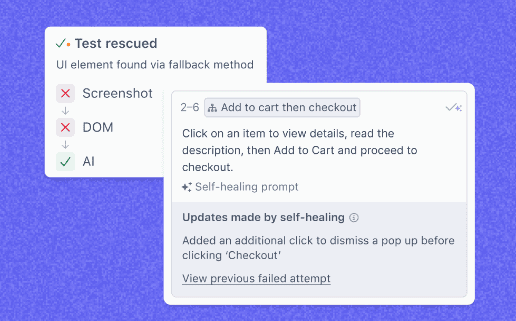

Tests in Rainforest are more resilient because they use multiple signals to identify elements, including:

- Visual appearance

- Automatically-identified DOM selectors

- AI-generated element descriptions

A change in any one of these identifiers won’t break your test, which dramatically reduces both false failures and test maintenance work. When changes are intentional, Rainforest’s AI proposes updates transparently rather than silently passing failures. Teams can see what changed, why it changed, and decide whether to accept it.
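To make the multi-signal idea concrete, here is a minimal Python sketch of the general technique: a locator that compares candidate elements against several independent signals and still matches when one of them drifts. This is a hypothetical illustration, not Rainforest’s implementation. The `Element` and `Match` types, the use of simple string equality for each signal, and the two-of-three threshold are all assumptions made for the example. When a match relies on fewer than all signals, the sketch flags it for human review instead of silently accepting it, and when too few signals agree, it returns no match so the step fails.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Element:
    """Hypothetical snapshot of one on-screen element (not a real product API)."""
    visual_hash: str   # stand-in for a visual fingerprint of the element
    selector: str      # DOM selector captured when the test was recorded
    description: str   # e.g. an AI-generated label such as "Checkout button"


@dataclass(frozen=True)
class Match:
    element: Element
    signals_matched: int
    needs_review: bool  # True when at least one signal drifted


# The independent identifiers compared for every candidate (assumed set).
SIGNALS = ("visual_hash", "selector", "description")


def score(expected: Element, candidate: Element) -> int:
    """Count how many signals agree between the recorded and candidate element."""
    return sum(getattr(expected, s) == getattr(candidate, s) for s in SIGNALS)


def locate(expected: Element, page: list[Element], min_agree: int = 2) -> Optional[Match]:
    """Return the best-scoring candidate, or None if too few signals agree.

    - all signals agree: confident match, no review needed
    - at least `min_agree` agree: match, but flagged so a human can confirm the change
    - fewer than `min_agree`: no match, so the step fails instead of being "healed"
    """
    if not page:
        return None
    best = max(page, key=lambda c: score(expected, c))
    n = score(expected, best)
    if n < min_agree:
        return None
    return Match(best, n, needs_review=n < len(SIGNALS))


# Usage: a refactor renames the button's selector, but its look and label stay the same.
recorded = Element("a1f3", "#checkout-btn", "Checkout button")
after_refactor = [
    Element("a1f3", "button.cta-primary", "Checkout button"),  # selector changed
    Element("9bc2", "#cancel-btn", "Cancel button"),
]
m = locate(recorded, after_refactor)
print(m.signals_matched, m.needs_review)  # prints: 2 True
```

The design choice worth noting is the `needs_review` flag: tolerating one drifted signal keeps the test from breaking, while surfacing the drift keeps the change visible rather than quietly passing.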

Rainforest’s no-code execution enables real speed

If you’re looking into AI QA and automated testing tools, you’re probably trying to increase software delivery speed, not just generate tests faster.

Rainforest’s no-code framework allows anyone on the team (technical or not) to understand, review, and update tests without specialized training. As AI lowers the barrier to creating tests, clarity becomes more important, not less. Rainforest keeps tests readable and reviewable, not buried in an abstraction layer you can’t audit.

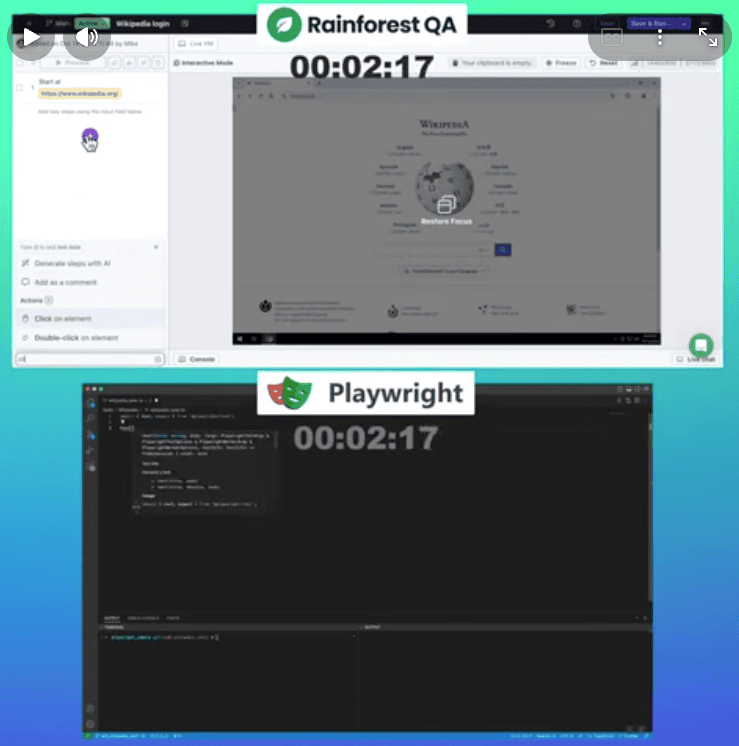

Our survey data shows that using Rainforest’s AI-accelerated, no-code platform is up to three times faster than using open-source frameworks for creating and maintaining end-to-end tests.

You can see the difference for yourself in this video, which shows the same test being created side-by-side in Rainforest and in Playwright:

AI handles the grunt work; humans decide what matters

Rainforest is built around a simple principle: AI should automate repetitive, low-leverage work, while humans focus on prioritization and judgment.

AI can recommend and generate tests, adapt to UI changes, and maintain coverage over time. Humans should decide which flows matter, which failures are release-blocking, and where quality assurance investment is most valuable. As AI makes it easier to create and maintain tests, human judgment becomes more important than ever.

This balance allows teams to move faster without sacrificing confidence in their test results.

Choose this if: You want AI to accelerate test creation and maintenance while preserving deterministic execution, transparent results, and human control over release decisions.


2. Mabl

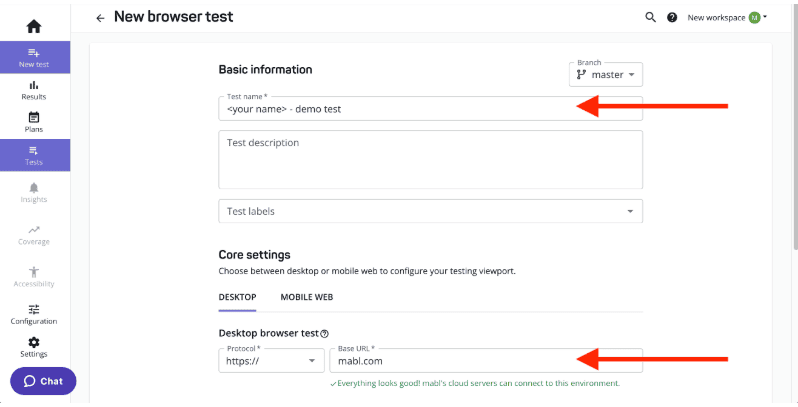

Mabl positions itself as an intelligent automation platform with AI-driven features aimed at reducing flaky failures and cutting down maintenance overhead. It’s designed to fit cleanly into CI workflows, with a focus on helping teams ship faster without constantly babysitting their test suite.

Screenshot of Mabl’s browser test creation interface

Where Mabl tends to do well is in stabilizing automation over time, especially in teams already using automated testing in CI/CD pipelines. It emphasizes practical intelligence: detecting change, surfacing drift, and keeping tests running as applications evolve.

The tradeoff is that teams still need governance. If AI-driven updates happen without strong review habits, you can end up with tests that pass while slowly drifting away from the original intent.

Choose this if: You want a CI-friendly automation platform that leans into AI to reduce flakiness and maintenance overhead.

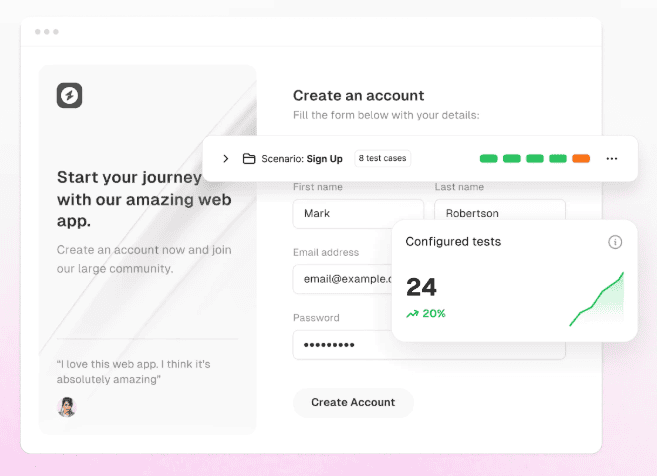

3. Momentic

Momentic is part of the newer wave of AI-first automated testing tools built around fast test authoring and AI-assisted maintenance. The platform leans into plain-language, assisted workflows designed to reduce setup friction and speed up early automation.

The appeal is speed and modern ergonomics: the tool fits the user’s workflow rather than the other way around. This is especially valuable for teams that want to move quickly without building a complex, code-based testing framework. Momentic generally fits teams that are comfortable with AI doing more of the translation work between intent and execution.

The tradeoff is standard for AI-forward systems: Teams should evaluate how predictable execution is over time and how much visibility they retain into what the system changed and why.

Choose this if: You want a modern, AI-forward tool focused on fast ramp-up and assisted automation, and you don’t mind sacrificing some visibility.

4. QA.tech

QA.tech centers its platform around AI agents that explore and validate application flows. Instead of relying heavily on structured, deterministic test scripts, teams prompt the system and allow AI to navigate the product autonomously.

This can significantly reduce upfront authoring work and surface coverage quickly. The system behaves more like an exploratory AI tester than a traditional test suite.

The tradeoff is predictability. Because runtime AI interpretation plays a central role, coverage boundaries can feel less explicit. Execution may not always be strictly deterministic, and teams must review AI-driven outcomes rather than defining fixed validation paths. For some teams, that flexibility is a benefit. For others, it introduces ambiguity into release decisions.

Choose this if: You prefer AI agents exploring your app autonomously and are comfortable reviewing AI-driven results instead of maintaining structured, deterministic test cases.

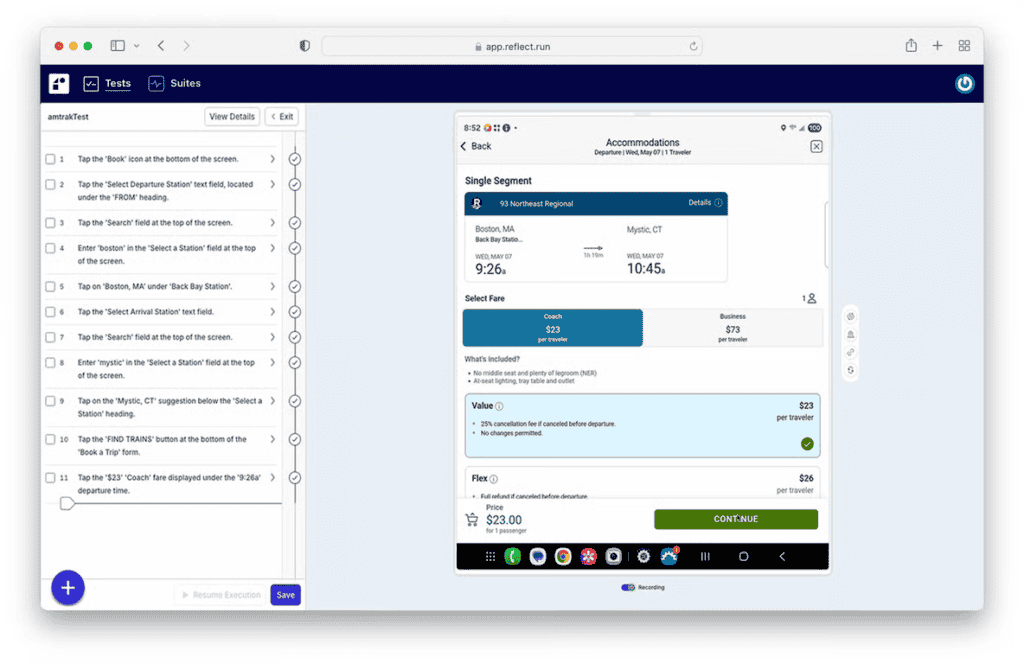

5. Reflect

Reflect is a lighter-weight automation platform oriented around simplifying browser testing with AI-assisted creation and maintenance. The goal is to reduce setup friction and make it easier for teams to get coverage without standing up a complex testing stack.

Its strength is approachability: smaller teams can get started faster, and AI helps smooth over some of the classic maintenance pain around changing UIs.

The tradeoff is depth. Teams with complex environments, advanced assertions, or strict governance needs may outgrow lightweight platforms quickly.

Choose this if: You want a simple way to get browser automation running quickly without heavy infrastructure.

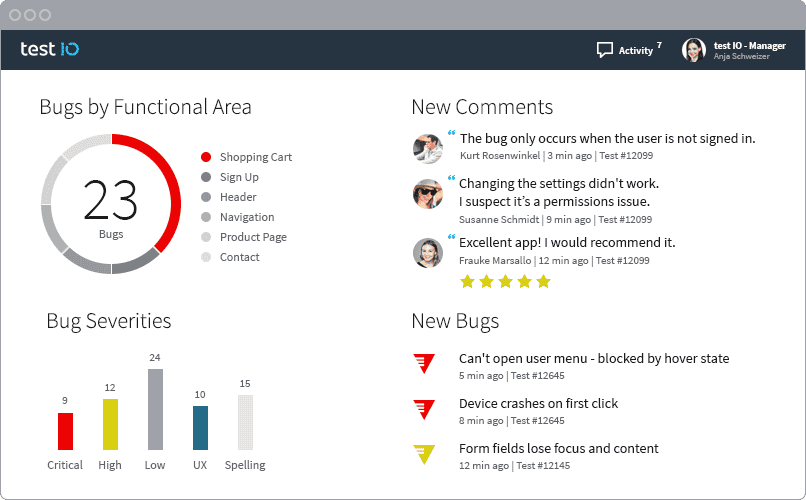

6. Test.io

Test.io is fundamentally different from pure automation platforms: it’s a managed testing service powered by a large tester network, with AI-assisted tooling layered around reporting and triage. It’s less “generate automated tests” and more “get real-world coverage without staffing it internally.”

Its strength is human coverage at scale, which can be especially useful for exploratory testing, device diversity, and scenarios that automation doesn’t catch well. (Though it’s worth noting that tools like Rainforest can now use AI for exploratory automated testing to generate a suggested test plan as a starting point.)

The tradeoff is that it’s not a deterministic automation suite living inside your CI pipeline. You’re buying ongoing service capacity, not just software.

Choose this if: You want human-driven testing coverage as a service, especially for exploratory and device-heavy validation, and don’t mind paying for capacity.

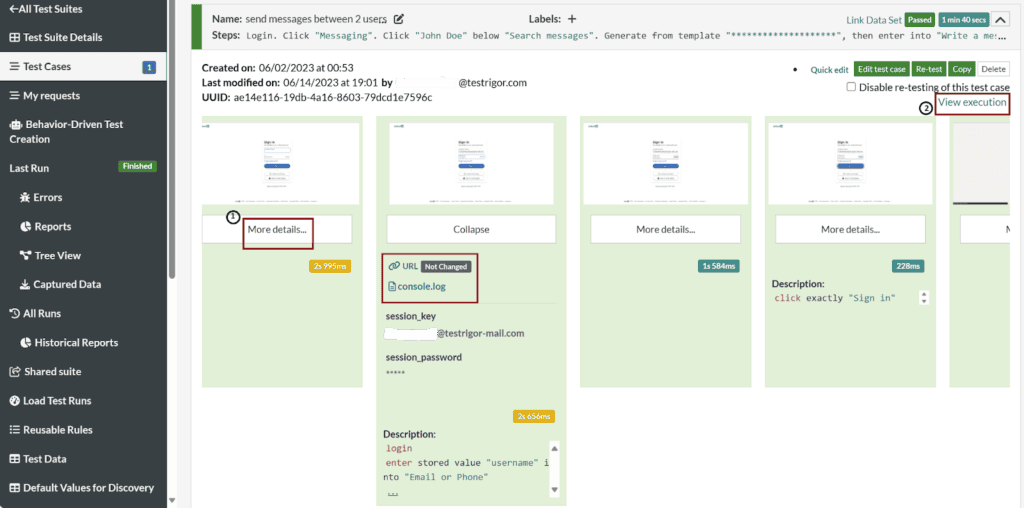

7. testRigor

testRigor’s core idea is simple and bold: Write tests in plain English and let the system interpret and execute them. It positions this as an accessibility breakthrough, with fewer scripts, fewer selectors, and more intent-driven automation.

It also leans enterprise, with support for ERP and CRM ecosystems like Salesforce, Workday, and Oracle, which makes it a common contender in larger organizations’ QA tool evaluations.

The tradeoff is that abstraction cuts both ways. When edge cases appear, debugging can feel opaque until teams learn the platform’s specific conventions and guardrails.

Choose this if: You want intent-driven automation in plain English, especially in enterprise and ERP-heavy environments, and don’t mind a steeper learning curve.

8. Functionize

Functionize positions itself as an enterprise AI automated testing platform with strong self-healing capabilities. Its AI adapts to UI changes automatically, aiming to minimize broken tests and reduce maintenance overhead at scale. The platform emphasizes resilience in fast-changing applications and markets its AI as a “digital workforce” operating alongside QA teams.

For large organizations with complex, evolving UIs, this maintenance automation can be compelling. AI-driven adaptation can significantly reduce the manual effort required to keep large test suites running.

The tradeoff is governance. When tests automatically adapt to changes, teams need strong visibility into what was modified and why. Without review processes, there’s a risk that tests evolve in ways that technically pass but no longer validate the original intent. AI that heals everything isn’t always what you want, especially when something should fail.

Choose this if: You’re an enterprise team that wants aggressive self-healing and you have the oversight to prevent test drift.

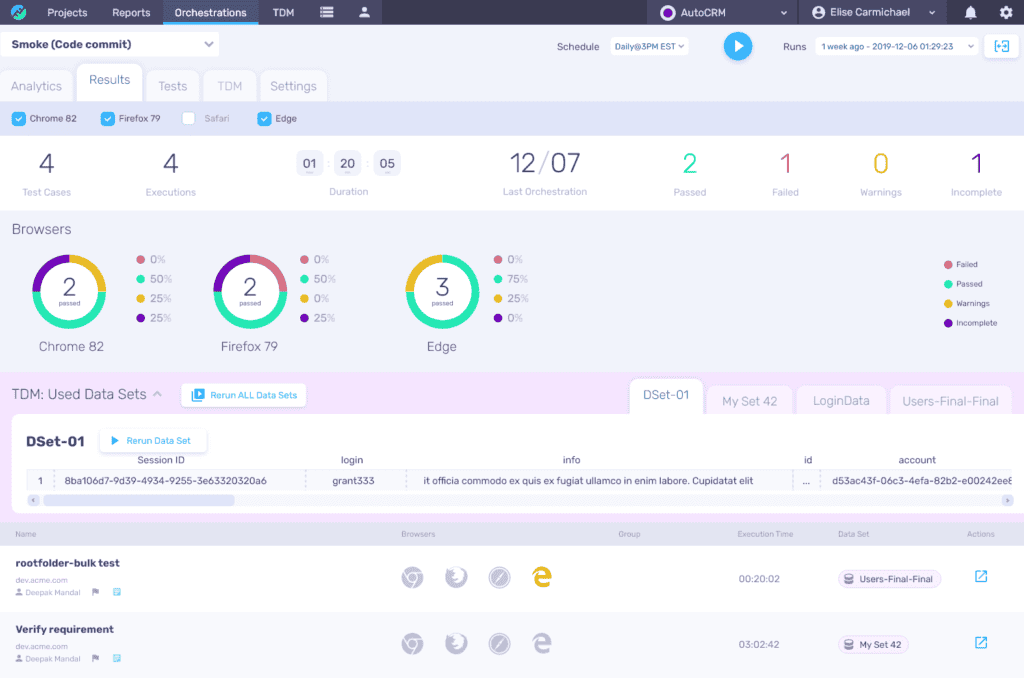

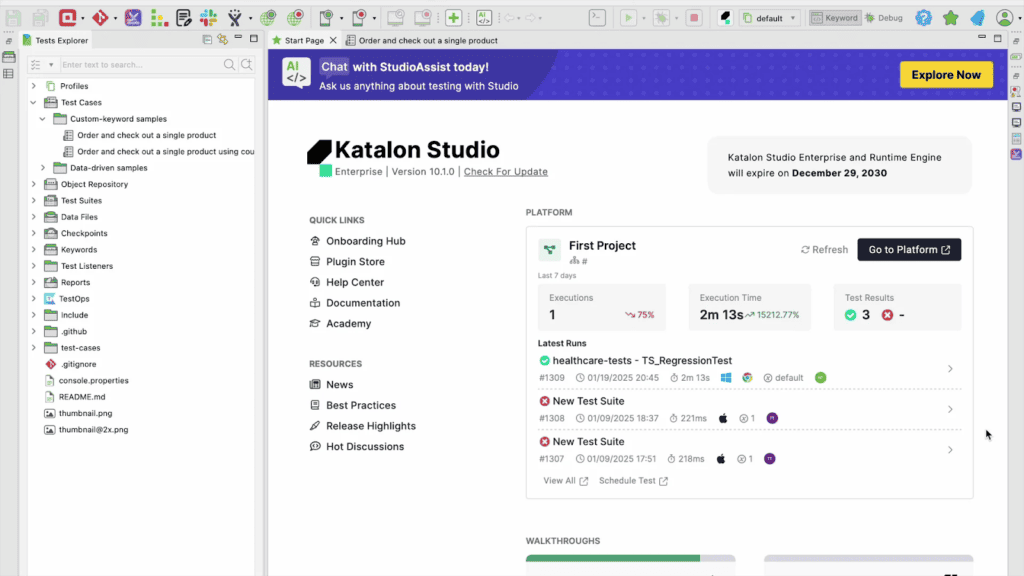

9. Katalon Platform

Katalon is a broader QA automation suite spanning web, API, mobile, and desktop testing. It includes AI-assisted capabilities, but its core value is coverage breadth and workflow flexibility across different testing types.

It’s often attractive for teams that want one platform to manage multiple channels and prefer a mix of low-code and code-based options.

The tradeoff is that broad suites can add complexity. Teams should evaluate how much of the platform they’ll truly adopt versus what becomes shelfware. It’s also worth noting that, despite its low-code options, a platform like Katalon tends to favor technical users, which can limit how much non-technical team members contribute to QA.

Choose this if: You need broad coverage across web, API, and mobile, and want a single suite to manage multiple test types. You don’t mind QA being largely code-based, and you have a team of QEs or other technical staff to manage it.

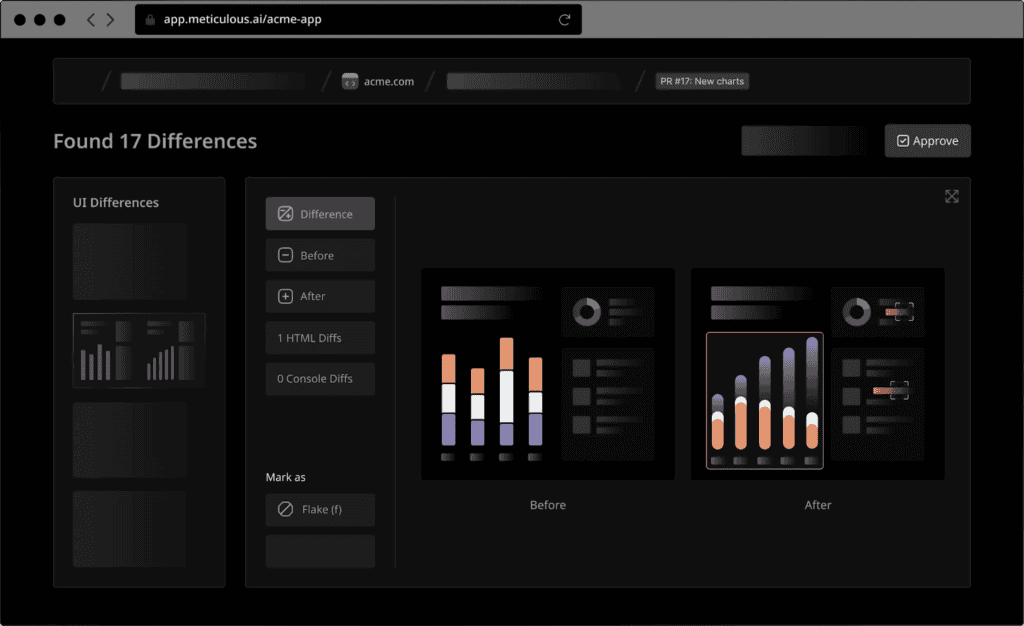

10. Meticulous

Meticulous takes an autonomous regression approach: Don’t write tests at all. It records real user sessions and replays them against new builds to catch regressions, shifting effort from authoring to review.

The benefit is breadth. By learning from real usage, it can surface issues across flows that teams might never think to script.

The tradeoff is intent and interpretation. Teams still need to review diffs and decide whether a change is expected or a bug. That review overhead doesn’t disappear; it just changes shape.

Choose this if: You want broad regression coverage with minimal authoring and are comfortable reviewing diffs instead of managing test cases.

11. Testim

Testim blends low-code automation with AI-driven stability features, focusing on smart element identification and reduced flake. It also allows deeper customization for teams that want more control than pure no-code platforms provide.

Its strength is flexibility. Teams can start simple and extend when needed, while still benefiting from AI-driven resilience in locating and interacting with UI elements.

The tradeoff is consistency. Results on hybrid platforms often depend on how disciplined teams are about standardizing test patterns, ownership, and review.

Choose this if: You want a flexible automation platform with AI stabilization, plus the option to customize when low-code hits its ceiling.

When AI actually makes QA better

AI has fundamentally changed what’s possible in test creation and maintenance, but it hasn’t eliminated the need for judgment, clarity, or intent.

As this landscape shows, the real difference between tools isn’t whether they use AI. It’s how they use it: whether AI clarifies signals or creates noise, whether it reduces ownership or just shifts it, and whether it preserves deterministic execution or introduces runtime ambiguity.

AI can generate tests. It can heal UI changes. It can accelerate triage. But it shouldn’t hide its decisions, silently force-pass failures, or encourage teams to create more tests than they can justify or maintain.

As the barrier to creating tests gets lower, human judgment matters more than ever.

The best AI-enhanced automated testing tools don’t promise to replace QA teams. They give teams leverage by automating the grunt work, preserving trust in results, and making it easier to ship with confidence as products and release cycles accelerate.

If you’re ready to explore Rainforest QA’s AI-accelerated features, let’s chat!