A playbook for operational resilience

AI is now embedded across the entire software development lifecycle.

Developers use it to generate code. Product managers use it to prototype features. Teams use it to move from idea to deployment faster than ever.

Code moves faster. Features ship more frequently. Iteration cycles shrink. Across industries, companies that embrace this speed have a distinct competitive advantage.

But in highly regulated industries, including financial services, speed can’t come at the cost of quality.

A note on terms: What we talk about when we talk about finserv

In this blog, “financial services” (finserv) is used as an umbrella term covering all organizations that build finance-related apps, including traditional banks (e.g., Chase, Bank of America), neobanks (e.g., Chime, Revolut), investing platforms (e.g., Robinhood, Stash), and fintech apps for businesses and consumers (e.g., Qualpay, Intuit, Klarna, Coinbase). “Fintech” in this blog specifically refers to technology-first companies that build digital products related to financial services (e.g., Chime, Klarna, and Robinhood).

Financial services software testing: The stakes are high

In finserv, the cost of failure isn’t just a support ticket. One UX bug might not be a big deal, but if poor user experience becomes a pattern, it can easily translate to financial losses, reputational damage, and long-term erosion of customer trust.

If the worst-case scenario plays out and a bug impacts financial well-being, exposes data, or otherwise harms customers or partners, regulators won’t hesitate to get involved. This can cascade into expensive lawsuits, fines, and PR nightmares.

So while shipping a bug here and there by accident may not be a huge deal for an online game or informational web app, finserv by nature has an entirely different risk tolerance.

Moreover, customer expectations of financial companies have never been higher. Research shows that 74% of users expect finserv companies to understand their needs and provide frictionless digital interactions. They expect accuracy in their balances, speed in their transactions, and zero friction in their workflows. And if they don’t get it, they can (and will) switch providers on a dime (pun intended).

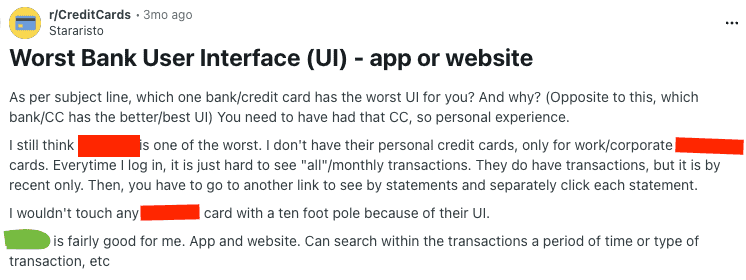

There are entire Reddit threads dedicated to complaining about user experience on finserv apps, like this:

With sites like Reddit increasingly influencing search and AIO results (and thus consumer and business decisions), there’s no hiding from user experience and interface blunders like these.

Even B2B finserv customers expect consumer-grade UX.

AI accelerates software development. But acceleration without quality control increases exposure. For financial services organizations, it can’t just be about faster releases. It must be about better, faster releases.

“Being able to catch [bugs] before they roll into production and interrupt your customers’ ability to use a service they are paying for is a huge win.” – Wendy Rice, Product Analyst at Qualpay, a Rainforest customer

The goal is operational resilience: the ability to ship safely and in compliance, even amidst the AI-driven acceleration of software releases.

The hidden cost of AI-enabled speed in finserv software testing

AI introduces new categories of risk that aren’t always obvious. A test may “pass” because the system found a workaround that no real user would ever take. A recommendation engine might suggest a dependency upgrade that looks legitimate but hasn’t been fully validated. A test suite may report clean results, while quietly missing edge cases in financial apps’ logic.

These failures slip through undetected. In finserv apps, that’s where the real danger lies.

A rounding issue in currency conversion. A subtle miscalculation in fees. A reporting screen that updates incorrectly under certain conditions. None of these may break your UI tests. But all of them can create financial, regulatory, and user trust consequences. Risk like this often becomes visible only after damage is done.
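To make the rounding example concrete, here is a minimal Python sketch. The function names and the half-up rounding rule are assumptions for illustration only; the right rounding rule depends on your currency and policy. It shows how the same conversion can land on a different cent depending on whether it runs on binary floats or exact decimals, which is exactly the kind of defect a UI test would never notice.

```python
from decimal import Decimal, ROUND_HALF_UP

CENT = Decimal("0.01")


def convert_float(amount: float, rate: float) -> float:
    """Convert using binary floats, then round to two decimal places."""
    return round(amount * rate, 2)


def convert_decimal(amount: str, rate: str) -> Decimal:
    """Convert using exact decimal arithmetic and an explicit rounding rule."""
    return (Decimal(amount) * Decimal(rate)).quantize(CENT, rounding=ROUND_HALF_UP)


# 1.15 units at a rate of 1.5 is exactly 1.725, which rounds half-up to 1.73.
print(convert_float(1.15, 1.5))        # 1.72 (the float product sits just below 1.725)
print(convert_decimal("1.15", "1.5"))  # 1.73
```

A one-cent difference looks trivial in isolation, but repeated across many transactions it becomes a reconciliation problem.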

The danger isn’t just in deploying faulty code. It also lies in trusting QA signals you can’t fully explain. No financial services app can afford to make shipping decisions based on outputs that aren’t reproducible or defensible.

In financial services, you simply cannot accept that level of ambiguity and risk.

Manual QA testing is still the norm

Although many financial services organizations leverage automation, manual testing remains common, especially for workflows considered “too important” to leave entirely to automation.

In fact, according to a recent Capgemini report on quality outcomes in financial services, automation is still lagging in finserv. Nearly half of organizations are still in the planning stage of automation, and on average they cover only a third of their test cases with automation.

On a more hopeful note, according to the same report, almost all finserv organizations surveyed (95%) use Gen AI for test data generation. But this is not well-integrated: Only 10% is embedded into the full SDLC.

Financial services software testing around payments, authentication, financial record updates, and compliance-sensitive reports often still receives human review. Taking extra care with these workflows reflects just how high-stakes QA is for financial services.

But manual-heavy QA models introduce their own pressure and risks. Regression cycles can stretch. Releases can slow down. In some cases, as belts tighten, teams are asked to validate more change with the same headcount. This can lead to burnout. And familiarity with the product can make it hard for human testers to spot issues.

So while it makes sense that the financial services industry still relies heavily on manual testing, there’s a good case to be made for increasing test automation steadily and thoughtfully. Done correctly, this should improve quality outcomes while also enabling release speed.

Moreover, for the financial services organizations whose apps still lack significant release coverage, test automation can extend coverage to the 95%+ that highly regulated industries must aim for.

The overburdened QA team problem

When developers ship faster thanks to AI, QA is often asked to test more, faster, without proportional increases in headcount. The objective of automated financial services software testing and AI-enhanced QA should be to provide relief for overburdened QA teams while improving quality outcomes.

QA leaders are often facing pressure from all angles. Headcount is tight, and hiring is slow, while development velocity only continues to increase with AI. Automation frameworks are expensive to build and difficult to maintain, but leadership still expects shorter release cycles.

AI-enabled test automation can help relieve some of the pressure by reducing repetitive and manual workflows, accelerating test creation for new features, and surfacing meaningful failures (not noise).

QA AI and automation, when applied thoughtfully, can handle repeatable regression paths and maintain consistent coverage as the product evolves. That frees human experts to focus on edge cases, complex financial logic, and the risk-heavy decisions that truly require judgment.

In that model, AI elevates QA, instead of replacing it.

QA automation in finserv must be defined and transparent

If QA is the stabilizing force in an AI-accelerated SDLC, then the automation behind it cannot be opaque. In financial services, AI in QA must be deterministic, explainable, and reproducible.

Deterministic, because financial workflows cannot rely on randomness. A payment flow, a fee calculation, or a permissions check must follow a logical, predictable path. The same inputs should produce the same outcome every time. Silent or inconsistent behavior is not acceptable when money and trust are involved.

Explainable, because a green checkmark without context doesn’t hold up under scrutiny. QA teams must be able to understand how a test result was reached, what path was taken, and what assumptions were embedded in the test. If you can’t explain it internally, you certainly can’t defend it to a regulator.

Reproducible, because when something fails (or worse, when something passes silently that shouldn’t have), you need to replicate the behavior, diagnose it, and document it. Reproducibility is what turns testing into evidence of controls.
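As a rough illustration of what “deterministic and reproducible” can mean in practice, the Python sketch below runs the same check several times and confirms that every run yields an identical record. The fee rule (`calculate_fee`), its basis-point inputs, and the record format are all hypothetical; they stand in for whatever your own system calculates and logs.

```python
import hashlib
import json


def calculate_fee(amount_cents: int, basis_points: int) -> int:
    """Hypothetical fee rule: basis points of the amount, rounded half up to a cent."""
    return (amount_cents * basis_points + 5_000) // 10_000


def run_check(inputs: dict) -> dict:
    """Run the fee calculation and return a record that can be stored as evidence."""
    canonical = json.dumps(inputs, sort_keys=True).encode()
    return {
        "inputs": inputs,
        "result": calculate_fee(**inputs),
        # The fingerprint ties the result to the exact inputs that produced it.
        "fingerprint": hashlib.sha256(canonical).hexdigest(),
    }


def is_reproducible(inputs: dict, runs: int = 5) -> bool:
    """True when repeated runs on the same inputs produce identical records."""
    records = [run_check(inputs) for _ in range(runs)]
    return all(record == records[0] for record in records)


inputs = {"amount_cents": 10_000, "basis_points": 290}
print(run_check(inputs)["result"])  # 290
print(is_reproducible(inputs))      # True
```

The integer arithmetic is a deliberate choice here: working in cents avoids floating-point drift, so the same inputs cannot quietly produce different fees.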

When evaluating AI testing tools in finserv, the real questions go beyond feature lists. You need to know which parts of your product are covered, where gaps exist, and if you can review artifacts that show exactly what happened during a run. It’s essential to understand if the system follows consistent logic (or if it improvises) and whether or not the tool reduces noise and increases signal quality.

That pressure is why operational resilience has become a board-level priority.

Choosing vendors as a highly regulated industry

Transparency expectations extend beyond internal processes. They shape the relationship between finserv companies and their vendors. Regulations and compliance frameworks, such as SOC 2, inform how companies handle their customer data. If a QA platform produces results you cannot interpret or defend, you must own that risk.

Rainforest’s approach is built with that reality in mind.

Deterministic AI ensures that tests follow structured, logical user paths. There is no randomness in how core workflows are validated. A failure reflects a genuine issue. A pass reflects the intended experience.

Visual logs provide defensible evidence. Teams can see what happened during a run, not just whether it passed. That visibility supports audit readiness and internal confidence.

A human-in-the-loop model ensures that automation accelerates testing without removing oversight. AI handles repeatable regression at scale. Humans evaluate outputs and make informed risk decisions about complex or sensitive scenarios.

Automation reduces human error in stable, repeatable paths. Human expertise focuses on business-critical judgment.

In QA for finserv, the combination of deterministic automation, visible evidence, and human oversight is what turns AI from a source of invisible risk into a center of operational resilience.

QA at the center of operational resilience

As AI accelerates development, QA is shifting left, from the end of the release cycle to the center of strategic risk decisions. QA teams are no longer just the final gate before deployment, responsible for executing regression checklists.

Today, QA has new responsibilities, including interpreting automated signals from AI-driven tools and determining whether a “pass” truly reflects a safe user experience. Teams translate technical outputs into risk assessments that leadership can stand behind, and they support audit readiness with clear, reproducible evidence.

That shift puts QA at the center of operational resilience.

Operational resilience, in this context, means more than uptime. It means maintaining quality control, transparency in testing, and trust in releases while development velocity increases. QA becomes the function that ensures acceleration does not compromise accountability for financial services apps.

Practical playbook: How to speed releases without lowering the bar

Step 1: Define critical flows and automate your smoke suite

Start by identifying the workflows that simply cannot fail. These are the flows where a defect would create financial loss, regulatory exposure, or an immediate hit to customer trust.

For most finserv teams, that includes:

- Payments, refunds, and payouts

- Login, MFA, and session management

- Fee, tax, and currency calculations

- Financial record creation and updates

- Reporting and transaction confirmations

These flows form your smoke suite. They should run consistently, automatically, and on every meaningful change. The goal at this stage is fast, reliable validation of the paths that protect money, access, and trust.
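One way to picture a smoke suite is as a small registry of named critical flows that a pipeline runs on every change. The Python sketch below assumes exactly that; the decorator, the flow names, and the stand-in checks are illustrative only and are not tied to any particular testing tool.

```python
from typing import Callable, Dict

SMOKE_SUITE: Dict[str, Callable[[], None]] = {}


def critical_flow(name: str):
    """Register a check as part of the smoke suite under a flow name."""
    def register(check: Callable[[], None]) -> Callable[[], None]:
        SMOKE_SUITE[name] = check
        return check
    return register


@critical_flow("login_requires_mfa")
def check_login_requires_mfa() -> None:
    # Stand-in for a real login attempt against a test environment.
    session = {"password_ok": True, "mfa_ok": False}
    logged_in = session["password_ok"] and session["mfa_ok"]
    assert not logged_in, "login succeeded without MFA"


@critical_flow("refund_never_exceeds_payment")
def check_refund_limit() -> None:
    # Stand-in for a real refund request; amounts are in cents.
    payment_cents, requested_refund_cents = 5_000, 7_500
    refunded = min(requested_refund_cents, payment_cents)
    assert refunded <= payment_cents, "refund exceeded original payment"


def run_smoke_suite() -> Dict[str, str]:
    """Run every registered check and report pass or fail per flow."""
    results = {}
    for name, check in SMOKE_SUITE.items():
        try:
            check()
            results[name] = "pass"
        except AssertionError as exc:
            results[name] = f"fail: {exc}"
    return results


print(run_smoke_suite())
```

Naming each flow explicitly keeps the suite readable for non-engineers and makes it obvious when a critical path has no check at all.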

Step 2: Expand outward and split repeatable flows from judgment-driven flows

Once your critical paths are covered, expand into secondary and tertiary user journeys. As you do, separate what is easily repeatable from what requires context and institutional knowledge.

Automate what is stable and predictable:

- Core regressions that behave the same way each run

- Established user journeys with clear expected outcomes

- Deterministic happy paths

Keep manual or human-supported testing for areas that demand judgment:

- Complex policy or rules updates

- Novel UX changes that affect behavior

- Edge cases shaped by business nuance

- Scenarios where context determines whether something is truly “correct”

Automation should handle what can be consistently validated. Humans should focus on where interpretation, risk assessment, and product understanding matter most.

This progression builds coverage in layers. First, protect what must not break. Then automate what is repeatable. Preserve human attention for what requires expertise.

Step 3: Make failures actionable

Ensure that when something breaks, your testing system produces clear, reproducible evidence that enables fast diagnosis and defensible decision-making.

Require:

- Clear logs and artifacts

- Deterministic execution

- Easy reproduction

Avoid:

- Silent passes that mask issues

- Randomized paths that hide logic flaws

- Black-box outputs without context
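The requirements above can be sketched as a small run record that captures every step, what was expected, and what actually happened. This is a hypothetical structure in Python, not the format of any specific tool. Note that a run with no recorded steps counts as a failure, which is one simple guard against silent passes.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Any, List


@dataclass
class Step:
    action: str
    expected: Any
    actual: Any
    passed: bool


@dataclass
class RunRecord:
    test_name: str
    steps: List[Step] = field(default_factory=list)

    def check(self, action: str, expected: Any, actual: Any) -> bool:
        """Record one step, including both values, whether or not it passed."""
        passed = expected == actual
        self.steps.append(Step(action, expected, actual, passed))
        return passed

    @property
    def passed(self) -> bool:
        # An empty run is treated as a failure rather than a silent pass.
        return bool(self.steps) and all(step.passed for step in self.steps)

    def to_json(self) -> str:
        """Serialize the run as an artifact that can be attached to a ticket or audit."""
        payload = {
            "test": self.test_name,
            "passed": self.passed,
            "steps": [asdict(step) for step in self.steps],
        }
        return json.dumps(payload, indent=2)


record = RunRecord("transfer_confirmation")
record.check("balance after transfer (cents)", expected=7_500, actual=7_500)
record.check("confirmation status", expected="settled", actual="pending")
print(record.to_json())
```

Because each step stores the expected and actual values, a failing run explains itself without anyone needing to rerun it first.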

Step 4: Add maintainable coverage

In many finserv organizations, automation coverage has grown slowly, thoughtfully, and deliberately. Manual oversight has often remained an important safeguard for critical workflows.

As you expand coverage, build in a way your team can realistically sustain, prioritizing clarity and release confidence over raw test volume. The goal is not to chase 100% automation, or even 100% coverage. (You should only test what matters.)

The real goal is to strengthen software quality and release speed in a way that remains stable, understandable, and defensible over time.

Focus on:

- Reducing production surprises

- Shortening regression cycles

- Increasing confidence in release decisions

- Maintaining clarity around coverage gaps
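Keeping coverage gaps visible can be as simple as comparing the flows you consider critical against the flows that currently have automated checks. The Python sketch below assumes both are maintained as plain lists of flow names; the names themselves are made up for illustration.

```python
from typing import Dict, Iterable, List, Union


def coverage_report(
    critical_flows: Iterable[str], automated_flows: Iterable[str]
) -> Dict[str, Union[float, List[str]]]:
    """Report the share of critical flows with automation and list the gaps by name."""
    critical = set(critical_flows)
    covered = critical & set(automated_flows)
    covered_pct = 100 * len(covered) / len(critical) if critical else 0.0
    return {"covered_pct": round(covered_pct, 1), "gaps": sorted(critical - covered)}


critical = ["payments", "refunds", "login_mfa", "fee_calculation", "reporting"]
automated = ["payments", "login_mfa", "fee_calculation"]
print(coverage_report(critical, automated))
# {'covered_pct': 60.0, 'gaps': ['refunds', 'reporting']}
```

Listing the gaps by name, rather than reporting only a percentage, keeps the conversation focused on which risks are still uncovered.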

Financial services software testing demands structure, clarity, and intention to enable speed. Focus AI use where it adds consistency, and use human judgment where it adds context. This will accelerate releases without compromising operational resilience.

How AI for QA reduces risk and strengthens resilience for financial apps

There are a lot of reasons to adopt AI for QA as a financial services company that produces web apps, but the core objective is to improve quality outcomes without slowing down releases.

AI supports that objective by reducing manual repetition and lowering human error in stable workflows, increasing coverage visibility, and speeding up test creation. Human expertise remains central: QA professionals evaluate outputs, interpret signals, and make defensible decisions about risk.

In finserv, operational resilience means continuously reducing risk while maintaining or increasing release velocity.

When applied intentionally, AI and automation in QA enable that balance.

Want to learn more about how Rainforest helps its fintech and finserv customers move faster without breaking things?