CircleCI

Learn how to configure CircleCI to run your Rainforest tests.

Overview

CircleCI is a hosted continuous integration (CI) server. Using the Rainforest CLI, it’s easy to configure CircleCI to run your Rainforest test suite after each deployment.

See our CI Sample Repository on GitHub for a more thorough explanation of how you can incorporate Rainforest tests into your release process for CircleCI, Jenkins, and Travis.

If you have CircleCI set up already, check out our CircleCI Orb to easily integrate Rainforest tests with your CI flow.

The Flow

Our project is configured to provide a continuous delivery flow by default.

Every time code is pushed to the develop branch, CircleCI:

- Runs the automated tests.

- Deploys the develop branch to a staging server.

- Runs the Rainforest tests using the CLI on the staging server.

- Once the Rainforest tests are successful, CircleCI pushes the develop branch to the master branch.

- The previous step triggers another CI build, which pushes the code to production.

If any step fails, the build fails. This prevents the deployment of broken code to your users.
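The flow above could be expressed as a CircleCI workflow along the following lines. This is a minimal sketch, not the sample project's actual configuration: the job names, the cimg/base image, and every script under scripts/ are hypothetical placeholders, and the promote_to_master job assumes the build has a deploy key with write access to the repository.

```yaml
version: 2.1

executors:
  default:
    docker:
      - image: cimg/base:stable   # assumption: any image with git and your tooling

jobs:
  automated_tests:
    executor: default
    steps:
      - checkout
      - run: ./scripts/run-tests.sh            # hypothetical test script

  deploy_staging:
    executor: default
    steps:
      - checkout
      - run: ./scripts/deploy.sh staging       # hypothetical deploy script

  rainforest_tests:
    executor: default
    steps:
      - checkout
      - run: ./scripts/run-rainforest.sh       # hypothetical wrapper around the Rainforest CLI

  promote_to_master:
    executor: default
    steps:
      - checkout
      - run: git push origin HEAD:master       # assumes the build has push access

  deploy_production:
    executor: default
    steps:
      - checkout
      - run: ./scripts/deploy.sh production    # hypothetical deploy script

workflows:
  continuous_delivery:
    jobs:
      - automated_tests
      # The next three jobs run only for the develop branch.
      - deploy_staging:
          requires: [automated_tests]
          filters:
            branches:
              only: develop
      - rainforest_tests:
          requires: [deploy_staging]
          filters:
            branches:
              only: develop
      - promote_to_master:
          requires: [rainforest_tests]
          filters:
            branches:
              only: develop
      # The push to master starts a new build, which deploys to production.
      - deploy_production:
          requires: [automated_tests]
          filters:
            branches:
              only: master
```

Because each job requires the one before it, a failure at any step stops the later jobs from running.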

Getting Started with CircleCI

For the whole picture, we encourage you to consult CircleCI’s Getting Started documentation.

The Config.yml File

The primary way to configure CircleCI is to store a .circleci/config.yml file in your Git repository. You can see the sample configuration for our project here.

Notice that instead of entering the Rainforest API token directly in the config.yml file, we use environment-specific test data. This keeps sensitive information away from anyone who can read the file, which matters most when the repository is public. For more information, see Using Test Data.

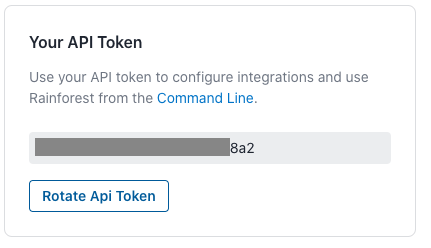

Configure test data in your project’s Test Data settings. You can find your Rainforest API token by navigating to your Integrations settings.

Your Rainforest API token.

Create a RAINFOREST_TOKEN environment variable in CircleCI corresponding to your test data, and you’re done. For more information, see CircleCI’s Using Environment Variables.
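As an illustration, a job might pass that variable to the Rainforest CLI as sketched below. Several things here are assumptions rather than part of the sample project: the image name is a hypothetical placeholder for any image that already has the rainforest binary on its PATH, and RAINFOREST_RUN_GROUP_ID is a hypothetical second environment variable holding the ID of the run group to execute. Check the --token and --run-group flags against the help output of your installed CLI version.

```yaml
version: 2.1

jobs:
  rainforest_tests:
    docker:
      # Hypothetical image; assumed to have the rainforest CLI installed.
      - image: example/ci-with-rainforest-cli:latest
    steps:
      - run:
          name: Run Rainforest tests
          # The token is read from the CircleCI environment at run time,
          # so it never appears in the repository.
          command: |
            rainforest run \
              --token "$RAINFOREST_TOKEN" \
              --run-group "$RAINFOREST_RUN_GROUP_ID"

workflows:
  rainforest:
    jobs:
      - rainforest_tests
```

Quoting the variables keeps the command well-formed even if a value is empty, in which case the CLI should fail on the missing token instead of misreading its arguments.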

Testing Your Setup

You can test your setup by committing a change to your develop branch and pushing it to your git repository. CircleCI picks up the change and deploys your code. With a new continuous delivery flow and QA process, you can move fast while not breaking anything.

Note: If you don’t see an API token in your Rainforest settings, click the Rotate API Token button to generate a new one.

If you have any questions, reach out to us at [email protected].
